In my blog post entitled Future Of Media, I’d daydreamed about a robot journalist:

My imaginary Robot Journalist (RoJo) is equipped with a camera, sensors, and an LLM (Large Language Model), and is dropped in the middle of a warzone or any other theater of action. The camera and sensors will gather unlimited inputs from Ground Zero. The LLM will process these inputs in real time, backed by the unlimited treasure trove of pretrained content it possesses. Ergo, RoJo can produce extremely comprehensive stories very rapidly and without the constraints of context and deadline faced by human journalists.

At the time, I’d called RoJo a “figment of my imagination”.

It no longer is.

Since I published that post a year ago, I have come across at least two examples of AIish journalism.

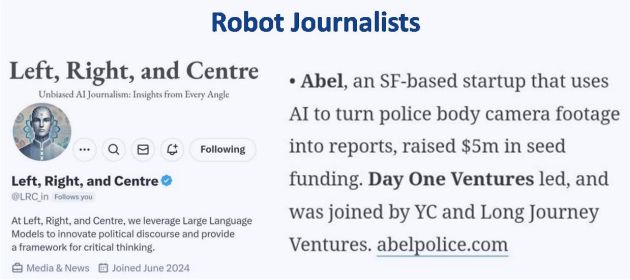

1. Left, Right, and Centre

Left, Right, and Centre bills itself as a provider of “Unbiased AI Journalism” with “Insights from Every Angle”. I promptly called out its resemblance to RoJo on X fka Twitter.

@s_ketharaman: Unbiased AI Journalist @LRC_in

Left, Right, and Center comes close to my vision of Robot Journalist. Especially the part about “Every Angle”.

2. Abel

San Francisco startup Abel comes close to my vision of Robot Journalist, just in law enforcement.

In general, the concept of robot journalism seems to be gaining traction. According to a recent edition of the Eye on AI newsletter from Fortune magazine, there are now so many robot journalists in the world that AI researchers have started conducting tests to compare them with human journalists.

… recently a group of AI researchers in Japan and Taiwan created a benchmark called NEWSAGENT to see how well LLMs (aka RoJo) can do at actually taking source material and composing accurate news stories.

The study arrived at the following conclusions:

- RoJo did a good enough job in most cases

- OpenAI's GPT-4o did better on metrics like objectivity and factual accuracy

- Human-written stories emphasized factual accuracy

- Alibaba's open-weight model, Qwen3 32B, did best stylistically

- RoJo consistently outperformed human-written stories.

Presumably perturbed by the results, the human journo raises the following objection:

The problem here is that in the real world, factual accuracy is the bedrock of journalism. If the models fall down on accuracy, they should lose in every case to the human-written stories, even if evaluators preferred the AI-written ones stylistically.

Based on that, the human journo argues:

Computer scientists should not be left to create benchmarks for real world professional tasks without deferring to expert advice from people working in those professions.

This is quite lame for at least two reasons:

- It’s one thing for a journo to presume that their readers care about factual accuracy and another thing for their readers to acknowledge that they actually do.

- Likewise, it’s one thing for a journo to claim that their work is factually accurate and another thing for their readers to agree that it actually is.

In the modern age of subscription-based journalism, I have doubts about both points, for reasons stated in my blog post entitled Why Media Can’t Be Neutral.

Besides, this is like Indian H-1B techies claiming that America runs only because of them, or Chinese AI chip manufacturers like Huawei claiming that their AI chips are as good as Nvidia’s RTX Pro 6000D and H20.

At the end of the day, journos produce content and readers consume it. Journos may ground their writing in factual accuracy, but only their readers get to decide whether the average article written by present-day human journos is really factually accurate, and how much importance they give to factual accuracy in the first place.

If researchers need to ask for anyone’s opinion, it’s the readers’.